Let's cut through the cybersecurity noise.

Every week there's a new breach, a new AI-powered hacking tool, a new "state-sponsored" campaign making headlines. Most coverage tells you what happened. Very little tells you what it actually means, where it's heading, and what deserves your full attention.

So here's what actually matters in 2026 — backed by hard numbers from the World Economic Forum, IBM, Gartner, Verizon, ISC2, and other organizations that measure the real-world threat landscape, not just write about it. The rules have changed. The playbook attackers used in 2023 is obsolete. What's replaced it is faster, more autonomous, and harder to detect than anything that came before.

Top cybersecurity trends going into 2026:

- Agentic AI as autonomous attacker — average adversary breakout time dropped to 48 minutes

- "Harvest Now, Decrypt Later" quantum threat — NIST's first post-quantum standards are now final

- Identity becomes the primary attack surface — 68% of breaches now involve the human/identity element

- AI supply chain poisoning — a single compromised agent can poison 87% of downstream decisions in under 4 hours

- Geopolitical OT/ICS pre-positioning — 1 in 3 global energy infrastructure assets targeted

- Deepfake CEO fraud at scale — $25M lost in a single live video call deepfake attack

- Cloud-native attack escalation — 40% of breaches now involve cloud-stored data

- The AI-powered SOC closes the skills gap — 4.8 million unfilled cybersecurity jobs, AI filling the void

Let's get into it.

1. Agentic AI: The Attacker That Never Sleeps

The 2025 CrowdStrike Global Threat Report documented something that should have made front pages everywhere: the average adversary breakout time — the window between an attacker gaining initial access and moving laterally through your network — fell to 48 minutes. The fastest recorded instance in 2024? A mere 51 seconds.

That's not a human moving through your network. That's an AI.

This defines the 2026 threat landscape. Agentic AI — autonomous systems that reason, plan multi-step attacks, chain vulnerabilities, call APIs, and adapt in real time — has crossed a critical threshold. It's no longer just being used to write malware. It is the attacker.

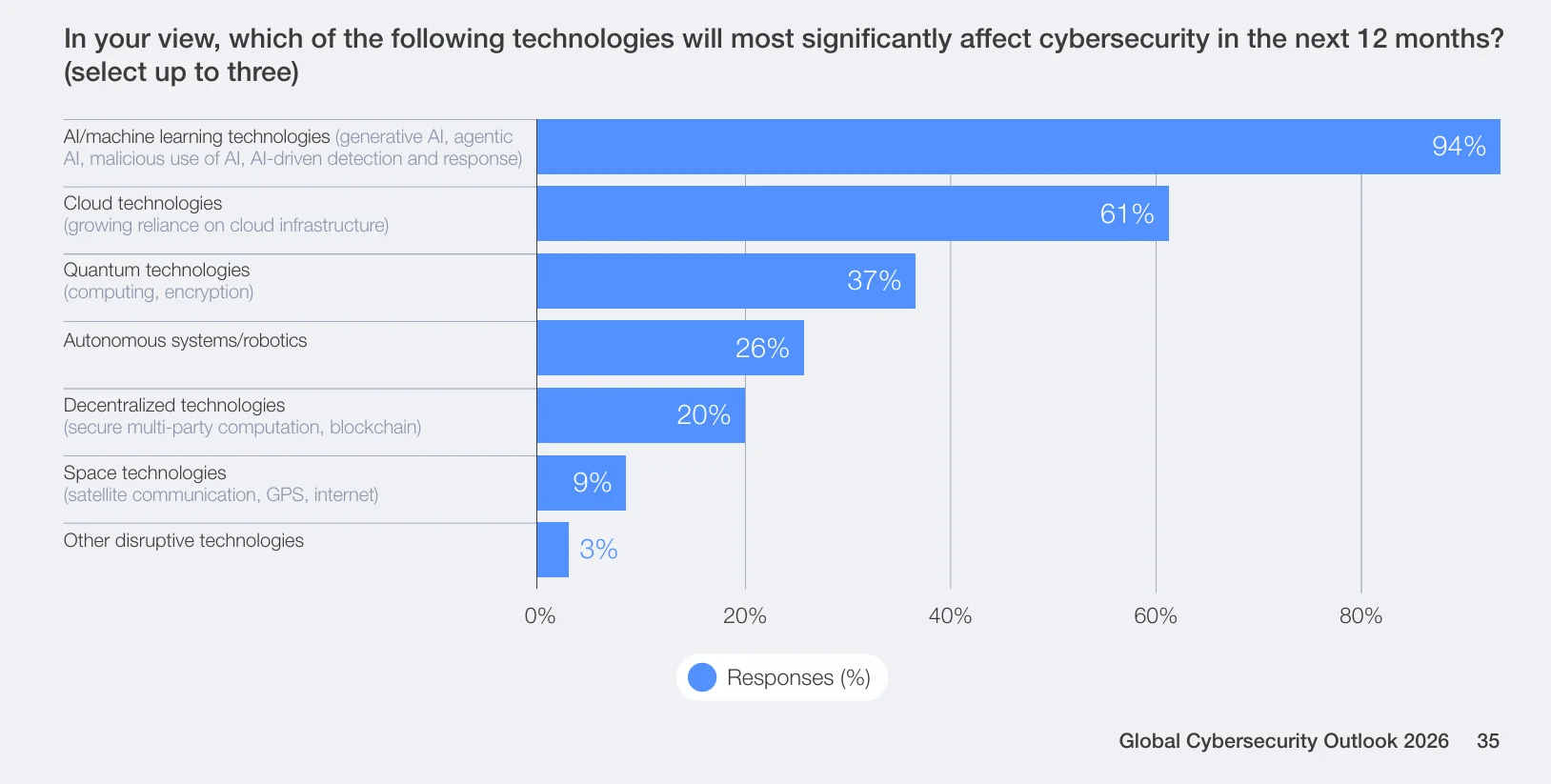

The WEF Global Cybersecurity Outlook 2026 identifies AI as the most consequential factor reshaping the threat landscape, with adversaries now targeting AI agents themselves — not just the humans behind them.

Microsoft's Digital Defense Report 2024 logged over 600 million cyberattacks per day — the vast majority automated, AI-assisted, and operating at machine speed. Traditional security operations, built around human response cycles of hours to days, are architecturally mismatched to this threat.

What's happening in the real world

CrowdStrike documented a new tier of threat actor in their 2025 Global Threat Report: fully automated intrusion operators running AI-assisted pipelines that autonomously identify targets, exploit vulnerabilities, and exfiltrate data — with no human operator actively managing the session. What previously required a skilled five-person Red Team can now be replicated by a single attacker with a configured AI agent.

Palo Alto Networks detected polymorphic malware in active 2025 campaigns — code that rewrites its own signature at each execution to evade detection. Their Unit 42 Threat Intelligence team confirmed AI-generated polymorphic payloads targeting financial services institutions, where traditional signature-based detection was entirely blind because the malware had never existed in that form before.

Google's Mandiant team published findings in M-Trends 2025 showing AI-powered spear-phishing campaigns achieving dramatically higher success rates than manually crafted attacks — because AI can generate perfectly contextual, grammatically flawless, personalized lures at industrial scale, sourcing context from LinkedIn profiles, public filings, and corporate communications.

Where this is heading

The arms race is AI vs. AI. Defenders who try to fight machine-speed attacks with human-speed security operations are structurally outmatched. Gartner projects that by 2027, 17% of all cyberattacks and data leaks will directly involve generative AI in the attack chain — up from negligible levels in 2024.

Enterprises that survive 2026 will be the ones deploying autonomous defense: AI that detects, triages, and responds without waiting for human approval on each action. (For a broader look at how enterprises are deploying agentic AI across business functions — and the infrastructure challenges that entails — see our generative AI trends for 2026.)

2. "Harvest Now, Decrypt Later" — The Quantum Time Bomb

Here's a threat that's uniquely unsettling: your encrypted data is being stolen right now — not to be read today, but to be decrypted in 3 to 5 years when quantum computers achieve sufficient capability. Everything your organization has encrypted with RSA or elliptic curve cryptography since 2020 is potentially sitting in an adversary's archive with an expiration date on its secrecy.

This is the HNDL attack — Harvest Now, Decrypt Later — and it's not theoretical.

WEF Global Cybersecurity Outlook 2026 identifies state-sponsored HNDL operations as one of the most consequential long-horizon threats to global digital infrastructure, with nation-state actors systematically building encrypted data stockpiles for future decryption.

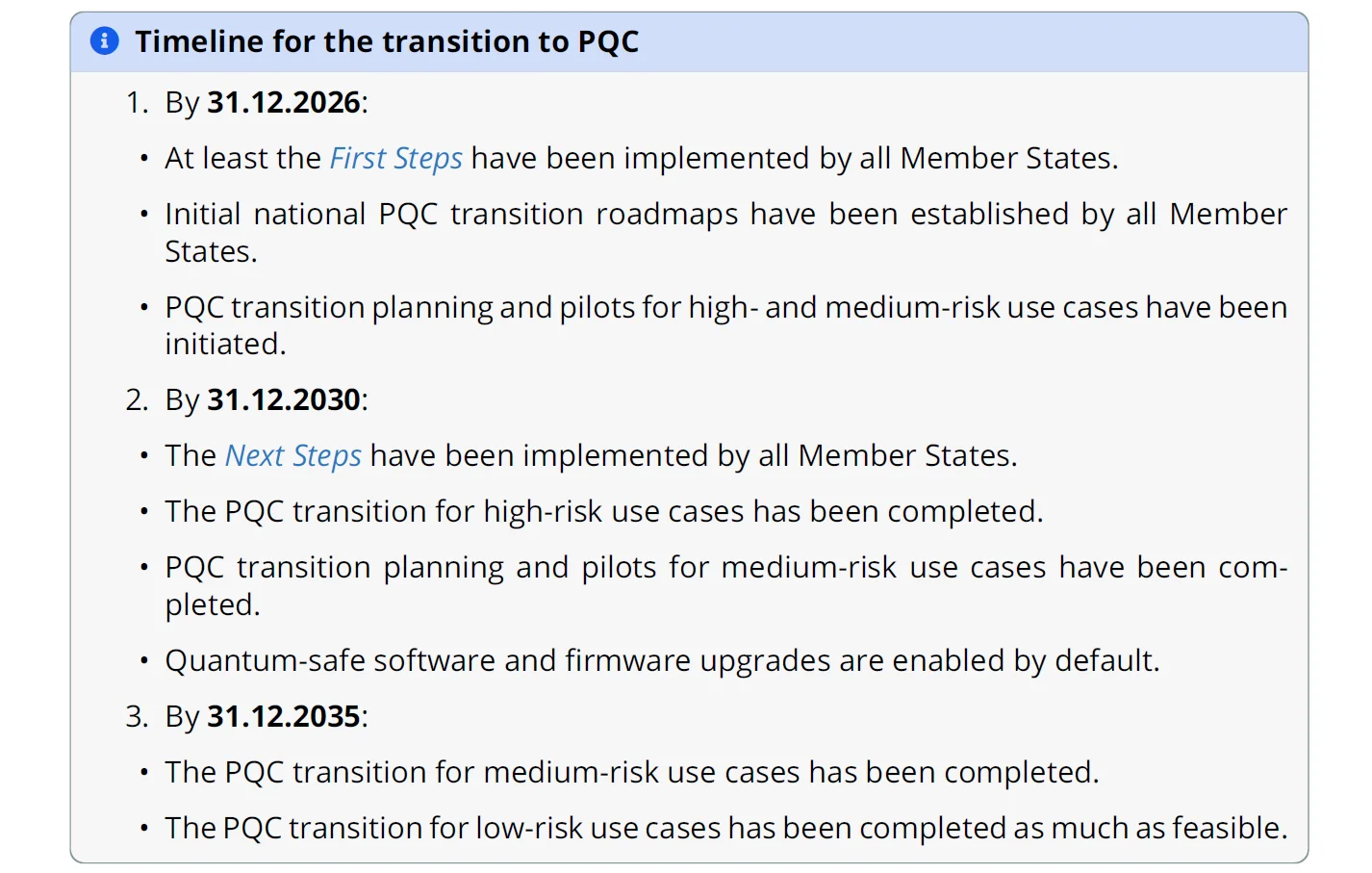

The National Institute of Standards and Technology finalized its first three post-quantum cryptography (PQC) standards in August 2024 — a direct acknowledgment that the threat timeline is now concrete.

Government mandates are accelerating: the EU requires member states to publish national PQC roadmaps in 2026, and US federal agencies are required to begin migrating away from classical encryption algorithms immediately. Cryptographic deprecation of RSA and ECC is expected by 2030; full disallowance by 2035.

That sounds like a long runway. It isn't. Migrating enterprise cryptography touches every piece of software, hardware, certificate, API, and protocol that encrypts data — a multi-year project for any organization of meaningful scale.

What's happening in the real world

IBM built their IBM Quantum Safe program specifically to address enterprise PQC migration. Their cryptography discovery tooling scans enterprise environments to map every instance of vulnerable encryption — certificates, key management systems, libraries, protocols — before migration begins. The platform is now deployed across major financial institutions, with IBM reporting that most clients discover 2-3x more cryptographic assets than their IT teams originally estimated.
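
As a rough illustration of what a cryptographic inventory pass involves, the sketch below tags algorithm names by quantum exposure. The inventory entries and the `classify` helper are hypothetical. Real discovery tooling inspects certificates, libraries, and protocols directly, which this does not attempt.

```python
# Hypothetical sketch: tag inventoried algorithms by quantum exposure.
# Public-key schemes built on factoring or discrete logarithms are the ones
# a large quantum computer running Shor's algorithm would break.
QUANTUM_VULNERABLE = ("RSA", "ECDSA", "ECDH", "DH", "DSA")
# Names of the three NIST standards finalized in August 2024 (FIPS 203, 204, 205).
POST_QUANTUM = ("ML-KEM", "ML-DSA", "SLH-DSA")


def classify(algorithm: str) -> str:
    name = algorithm.upper()
    # Check post-quantum names first so "ML-DSA" is not matched as "DSA".
    if any(name.startswith(prefix) for prefix in POST_QUANTUM):
        return "post-quantum"
    if any(name.startswith(prefix) for prefix in QUANTUM_VULNERABLE):
        return "quantum-vulnerable"
    return "review"  # anything unrecognized goes to a human


def summarize(inventory):
    report = {}
    for asset, algorithm in inventory:
        report.setdefault(classify(algorithm), []).append(asset)
    return report


# Made-up inventory entries for illustration only.
inventory = [
    ("vpn-gateway-cert", "RSA-2048"),
    ("payments-api-tls", "ECDSA-P256"),
    ("backup-archive-kem", "ML-KEM-768"),
    ("db-at-rest", "AES-256-GCM"),
]
report = summarize(inventory)
print(report)
```

The hard part in practice is building the inventory itself, not classifying it, which is consistent with the undercounting IBM describes.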

Google has completed the migration of its internal infrastructure and core services to post-quantum cryptography. By defaulting to the finalized NIST standard ML-KEM (FIPS 203) across Chrome and Google Cloud, the company now secures the majority of its global traffic against 'harvest now, decrypt later' threats, effectively neutralizing the immediate risk of the quantum time bomb. This represents the largest-scale production deployment of PQC algorithms to date, operating across billions of daily connections.

Apple updated iMessage in 2024 to use PQC algorithms for key establishment through their PQ3 protocol, making it one of the first mass-consumer messaging platforms to deploy quantum-resistant encryption (Signal added post-quantum key agreement with PQXDH in 2023). Their design combines classical and post-quantum algorithms to protect against both current and future threats, setting a design precedent for consumer-grade cryptographic migration.
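
The hybrid idea can be sketched in a few lines: derive the session key from both a classical and a post-quantum shared secret, so an attacker has to break both exchanges to recover it. This is not Apple's PQ3 construction. The two secrets below are random placeholders standing in for real ECDH and ML-KEM outputs, and the `info` label is made up. HKDF is written out per RFC 5869 using only the standard library.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with HMAC-SHA256: extract, then expand."""
    if not salt:
        salt = b"\x00" * hashlib.sha256().digest_size
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()  # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:  # expand step
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pq_secret: bytes) -> bytes:
    # Concatenating both secrets makes the derived key depend on each of them.
    return hkdf_sha256(classical_secret + pq_secret, salt=b"", info=b"hybrid-demo-v1")


# Placeholders: in a real protocol these come from ECDH and ML-KEM respectively.
classical_secret = os.urandom(32)
pq_secret = os.urandom(32)
key = hybrid_session_key(classical_secret, pq_secret)
print(len(key))
```

Production protocols bind more context into the derivation (transcripts, identities, ciphertexts), so treat this only as the shape of the idea.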

Where this is heading

HNDL attacks are happening regardless of organizational readiness. The theft is occurring now; the damage arrives later. Financial records, healthcare data, classified communications, M&A intelligence — anything encrypted with classical algorithms today has a known future exposure window.

Organizations that begin cryptographic migration now have a meaningful buffer. Those that wait until quantum capability makes the news will have already forfeited their data's long-term confidentiality.

3. Identity Is the New Perimeter — And It's Under Siege

The network perimeter is dead. VPNs, firewalls, and IP-based access controls were built for on-premise infrastructure. In a world of cloud-native applications, remote workforces, and autonomous AI agents operating across enterprise systems, identity is the only meaningful security boundary that remains — and attackers have fully internalized this.

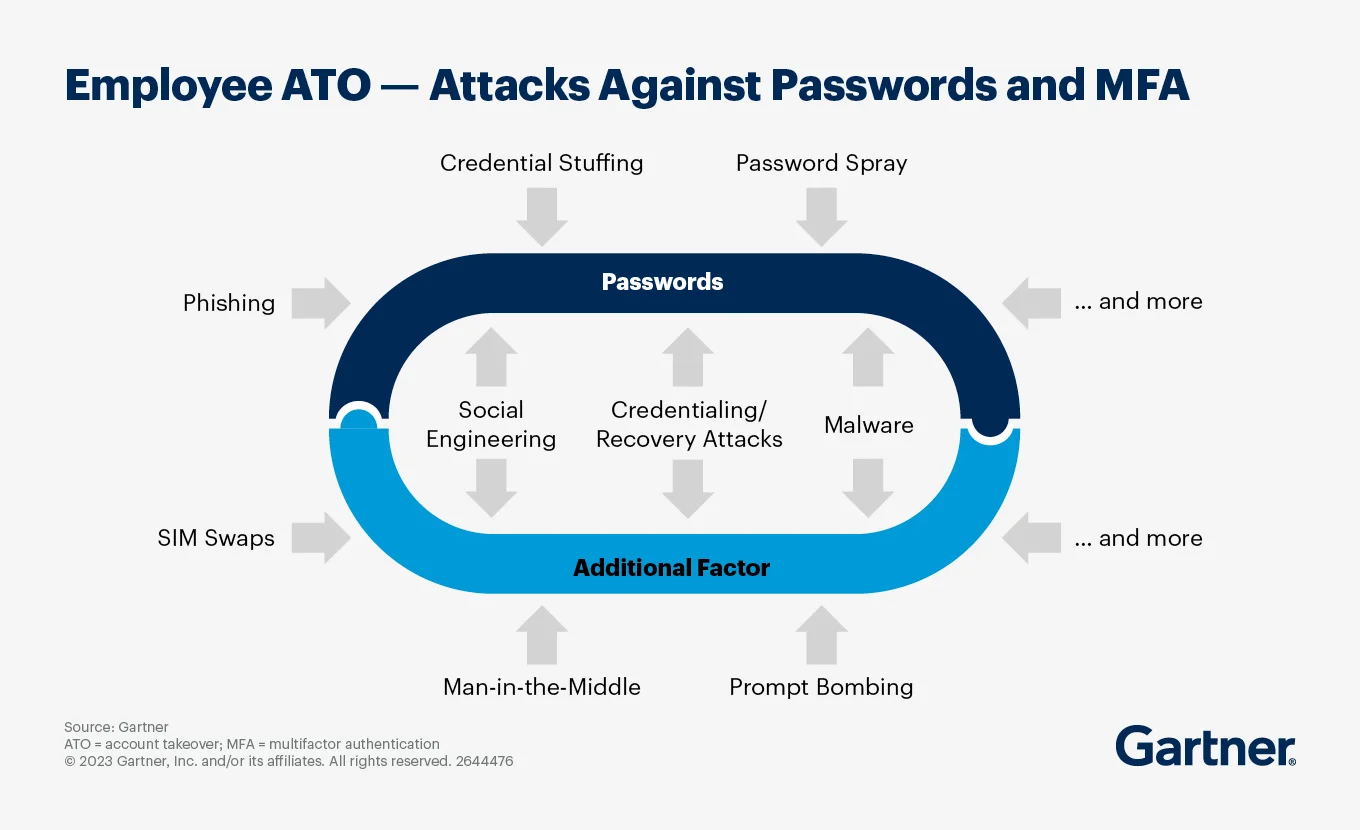

Verizon's 2024 Data Breach Investigations Report found that 68% of breaches involved a human element — credentials, social engineering, or privilege abuse. Credential theft has become the single most common initial access vector, outpacing both vulnerability exploitation and malware as the primary entry point.

The surface area has exploded beyond human accounts. Modern enterprises manage three categories of identity, each expanding rapidly:

- Human identities: Employees, contractors, third-party vendors — each with credentials, roles, and access scopes

- Machine identities: Service accounts, API keys, certificates, CI/CD tokens — often outnumbering human accounts 10-to-1 in cloud-native environments

- AI agent identities: Autonomous agents operating on behalf of users, accumulating permissions, calling external services, and making decisions — with minimal governance

Gartner identifies identity fabric immunity and identity threat detection and response (ITDR) as the top priorities for enterprise identity and access management programs in 2026 — a complete reorientation from perimeter-focused thinking.

What's happening in the real world

Microsoft processes over 78 trillion security signals per day through their identity security infrastructure, according to their Digital Defense Report 2024. Their data reveals a damning gap: enabling multi-factor authentication stops 99.9% of automated credential attacks — yet fewer than 50% of enterprise accounts have MFA enforced across all applications. The gap between "we have MFA" and "MFA is enforced everywhere" is where breaches live.

CyberArk's 2024 Identity Security Threat Landscape Report found that 93% of organizations experienced two or more identity-related breaches in the previous 12 months. The 2025 report emphasizes that machine identities now outnumber human credentials 82 to 1 — confirming that the explosion of service accounts, API keys, and automated processes has created an identity governance gap that most security programs haven't addressed.

Okta's threat intelligence team documented a surge in attacks specifically targeting identity providers and single sign-on (SSO) systems in their 2025 Secure Sign-in Trends Report. The pattern is consistent: attackers bypass the front door entirely and target the system that issues the keys. Once they hold a valid identity token, they're an authorized user by every system's logic, invisible to traditional intrusion detection.

Where this is heading

2026 is the year Zero Trust transitions from philosophy to operational mandate. The specific gap that most organizations haven't closed: non-human identity lifecycle management.

Most enterprises have no comprehensive inventory of their machine identities, no automated lifecycle management, and no privilege governance for them. Every new AI agent deployed without proper identity controls is a credential waiting to be compromised — and the number of AI agents per enterprise is doubling annually.
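
A minimal sketch of what lifecycle checks on non-human identities might look like, assuming an inventory already exists. The record fields, the 90-day rotation limit, and the 30-day idle limit are all hypothetical policy choices, not values from any of the reports cited here.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class MachineIdentity:
    name: str
    owner: Optional[str]   # accountable human or team, if any
    last_rotated: date     # when the credential was last rotated
    last_used: date        # most recent observed use


def findings(identity: MachineIdentity, today: date,
             max_key_age_days: int = 90, max_idle_days: int = 30) -> List[str]:
    issues = []
    if identity.owner is None:
        issues.append("no owner")
    if today - identity.last_rotated > timedelta(days=max_key_age_days):
        issues.append("credential overdue for rotation")
    if today - identity.last_used > timedelta(days=max_idle_days):
        issues.append("idle, candidate for deprovisioning")
    return issues


# Made-up inventory entries for illustration only.
today = date(2026, 1, 15)
inventory = [
    MachineIdentity("ci-deploy-token", "platform-team", date(2025, 12, 20), date(2026, 1, 14)),
    MachineIdentity("legacy-report-svc", None, date(2024, 6, 1), date(2025, 3, 2)),
]
for identity in inventory:
    print(identity.name, findings(identity, today))
```

The same three questions (who owns it, how old is the credential, is it still used) apply equally to service accounts, API keys, and AI agent credentials.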

4. AI Supply Chain Poisoning — The Invisible Backdoor

This is the most underestimated threat on this list. And it may already be inside your deployed models.

Traditional software supply chain attacks are visible: malicious code gets injected into a library, behavior deviates from expectations, anomaly detection flags it. AI supply chain attacks work completely differently. A poisoned model behaves identically to a legitimate one under all normal conditions. The backdoor activates only on a specific trigger input — making it extraordinarily difficult to detect with standard security tooling that watches for behavioral anomalies.

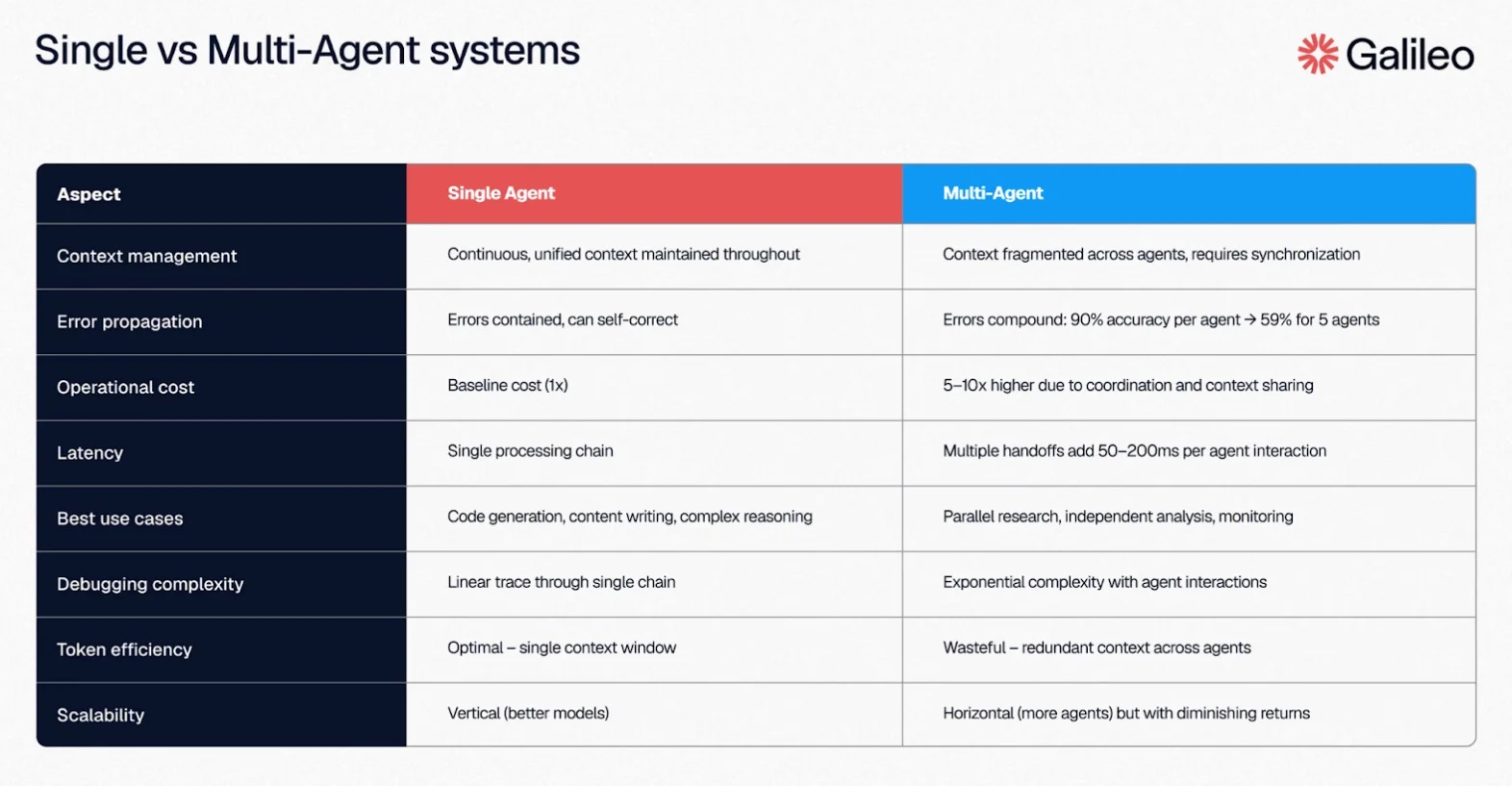

A 2025 analysis by Galileo AI on multi-agent system failures found that a single compromised agent could poison 87% of downstream decision-making within just 4 hours. These 'cascading failures' represent a new class of supply chain risk where a breach in one specialized tool or API invisibly propagates through an entire enterprise AI ecosystem.

MITRE's ATLAS framework — the adversarial AI counterpart to ATT&CK — documents four primary attack vectors against AI supply chains:

- Training data poisoning: Injecting corrupted or misleading examples into datasets before model training, embedding hidden behaviors that activate on specific inputs

- Model weight manipulation: Altering model parameters during or after training to introduce backdoors while preserving normal performance metrics

- Plugin and extension compromise: Backdooring tools and integrations that AI agents call during operation

- Agent action library poisoning: Corrupting reusable action modules that agentic systems chain together to execute complex tasks

What's happening in the real world

HiddenLayer, the AI security firm, published research demonstrating that popular open-source model weights hosted on public repositories contained embedded malicious payloads that survived serialization and deserialization. The attack required no exploitation — just downloading and loading what appeared to be a legitimate, highly-rated model. Their scanner found malicious artifacts in models with thousands of downloads and high community ratings.
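
To make the "just loading it" risk concrete: Python's pickle format, which many model checkpoints are built on, can invoke arbitrary callables during deserialization. The sketch below walks a pickle's opcode stream with the standard library's `pickletools` without ever loading it. It is a simplified illustration, not HiddenLayer's scanner, and the `Payload` class is a harmless stand-in for a real malicious object.

```python
import pickle
import pickletools

# Opcodes that import names or invoke callables during unpickling.
SUSPICIOUS_OPCODES = {"GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX"}


def suspicious_opcodes(data: bytes) -> set:
    """Inspect the pickle opcode stream without executing it."""
    return {op.name for op, _arg, _pos in pickletools.genops(data)} & SUSPICIOUS_OPCODES


class Payload:
    # Demonstration only: unpickling this object would call print("pwned").
    def __reduce__(self):
        return (print, ("pwned",))


benign = pickle.dumps({"layer1.weight": [0.1, 0.2, 0.3]})
malicious = pickle.dumps(Payload())  # serializing does not run the payload

print(suspicious_opcodes(benign))
print(suspicious_opcodes(malicious))
```

Legitimate framework checkpoints also use some of these opcodes to rebuild tensors, so a practical scanner needs an allowlist of expected imports rather than a blanket block.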

NVIDIA's Product Security team documented "model card spoofing" attacks on public repositories — adversaries uploading near-identical copies of popular models with subtly altered weights, accumulating high download counts before detection. The models passed all standard performance benchmarks; the malicious behavior was triggered exclusively by specific input sequences designed by the attacker.

Protect AI found that over 10% of publicly available machine learning models scanned in their 2024 AI/ML Security Report contained known vulnerabilities or suspicious artifacts — with the highest concentration in models distributed without cryptographic signatures or provenance attestation. No signing, no traceability, no supply chain integrity.
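
The simplest form of supply chain integrity is pinning: record a model file's SHA-256 digest when it is reviewed, and refuse to load anything that no longer matches. The sketch below assumes a digest recorded out of band. The file name and contents are stand-ins, and a digest check alone says nothing about who produced the file, which is what signing and attestation add.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large weight files are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned_digest: str) -> bool:
    # Constant-time comparison of the computed and pinned hex digests.
    return hmac.compare_digest(sha256_of(path), pinned_digest.lower())


with tempfile.TemporaryDirectory() as tmp:
    model_path = Path(tmp) / "model.bin"         # stand-in for real weights
    model_path.write_bytes(b"original weights")
    pinned = sha256_of(model_path)               # recorded at review time
    ok_before = verify_artifact(model_path, pinned)
    model_path.write_bytes(b"tampered weights")  # simulate a swapped artifact
    ok_after = verify_artifact(model_path, pinned)

print(ok_before, ok_after)
```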

Where this is heading

Enterprises racing to deploy AI agents are doing near-zero supply chain vetting on the models, frameworks, and plugins they adopt. "Where did this model come from and who handled it?" is as critical in 2026 as "where did this npm package come from?" was in 2017. We know how that story played out.

Expect AI model provenance, signing, and attestation standards to become regulatory requirements in financial services, healthcare, and critical infrastructure within the next 24 months.

5. Geopolitical Cyber Warfare and the OT/ICS Siege

Something fundamentally changed in 2024–2025 that most organizations still haven't internalized: nation-state cyber operations stopped being primarily about espionage and shifted toward operational pre-positioning. The goal is no longer just stealing intelligence — it's quietly establishing dormant access inside critical infrastructure to be activated at a geopolitically chosen moment.

PwC's analysis of OT security risk puts the scale plainly: more than a third of global energy and utilities infrastructure has already experienced cyber pre-positioning activity — quiet access, operational mapping, and data collection by AI-assisted nation-state adversaries who are not there to steal, but to wait.

The IT/OT convergence problem makes this especially dangerous. Industrial control systems (ICS) and SCADA systems were designed decades ago for operational efficiency — not security. They now connect to IT networks and increasingly to the internet, carrying the full attack surface of modern IT with almost none of the defensive tooling.

Dragos' OT Cybersecurity Year in Review tracked 26 distinct threat groups actively targeting industrial control systems by early 2026 — up from 13 in 2023. The most capable groups are specifically focused on power grids, water treatment facilities, and natural gas infrastructure.

What's happening in the real world

Dragos documented VOLTZITE — a sophisticated threat group specifically targeting electric utilities, oil and gas, and telecommunications infrastructure — in their 2025 Year in Review. VOLTZITE's operations focus on long-term persistent access and intelligence collection rather than immediate disruption, consistent with the pre-positioning pattern seen across multiple nation-state actors. Dragos confirmed active VOLTZITE intrusions across multiple US electric utilities.

Fortinet's 2025 OT Security Report surveyed 550 OT security professionals globally and found that 73% of OT organizations experienced at least one intrusion in the past year — and that 31% of intrusions originated at IT/OT convergence points, confirming that digital transformation initiatives connecting industrial systems to corporate networks are creating the attack paths adversaries are actively exploiting.

Honeywell's 2025 USB Threat Report found that threats targeting OT environments increased 46% year over year, with a pronounced shift toward living-off-the-land tactics — using legitimate industrial protocols and tools to conduct reconnaissance, making detection significantly harder in environments with minimal security monitoring and no behavioral baseline.

Where this is heading

The WEF Global Cybersecurity Outlook 2026 explicitly identifies geopolitical fractures as the defining context for this year's threat landscape. Nation-states are treating civilian infrastructure as active strategic terrain — not just intelligence targets.

The sectors most at risk beyond energy: water treatment, food and agriculture, transportation logistics, and healthcare. A coordinated disruption across several of these simultaneously would cause maximum civilian impact with deniable attribution.

6. The CEO Doppelgänger — Deepfake Fraud Goes Live

In January 2024, an employee in the Hong Kong office of Arup — one of the world's largest engineering and design firms — joined a video call with the company's CFO and several senior colleagues. Based on instructions given in the meeting, the employee approved and processed wire transfers totaling roughly $25 million.

The CFO wasn't there. Neither were the colleagues. Every person on that call — except the victim — was a real-time AI-generated deepfake.

That case marked a turning point: real-time video deepfakes of executives, indistinguishable in live settings, are now operationally viable for sophisticated threat actors. This isn't a future threat. It already happened.

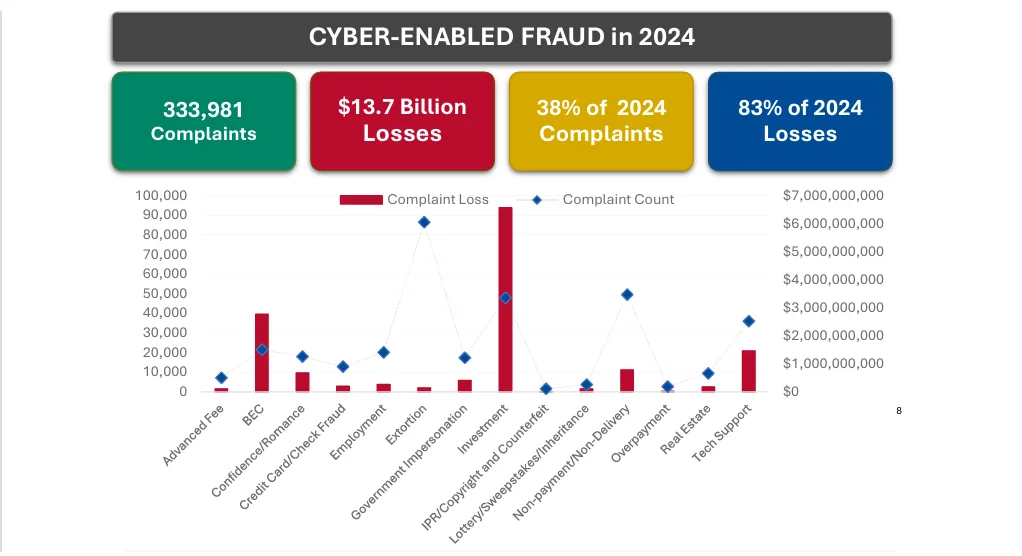

The FBI's Internet Crime Complaint Center (IC3) 2024 Annual Report ranked Business Email Compromise among the highest-dollar cybercrime categories, at $2.77 billion in reported US losses in a single year. AI-augmented social engineering is accelerating both the volume of these attacks and the average transaction size per incident.

Gartner predicted that by 2026, 30% of enterprises would consider their identity verification and authentication solutions unreliable in isolation because of AI-generated deepfakes, a direct acknowledgment that current authentication models weren't designed for this threat class.

What's happening in the real world

Ferrari narrowly avoided a deepfake fraud attempt in 2024 when a senior executive received WhatsApp messages impersonating CEO Benedetto Vigna, complete with AI voice cloning that matched his tone and cadence. The attempt failed only because the target asked a personal question the AI couldn't answer — something Vigna had mentioned in a private conversation. Ferrari subsequently revised all authentication protocols for executive-level financial approvals, requiring out-of-band verification regardless of medium.

Pindrop's 2025 Voice Intelligence and Security Report found that AI-synthesized voice audio now appears in a measurable percentage of enterprise call center contacts — and that current voiceprint biometric authentication systems fail to detect synthetic audio at a rate that is operationally significant for high-value verification scenarios. Financial institutions are the primary targets.

Microsoft's security team documented deepfake-assisted spear-phishing campaigns in their Digital Defense Report 2024, where AI-cloned audio messages impersonating board members were used to bypass standard verification checkpoints in wire transfer authorization workflows at financial services and energy sector targets.

Where this is heading

Any verification method that relies on voice recognition, video appearance, or communication style is structurally compromised. What's replacing them: out-of-band verification (a separate, pre-established communication channel for high-value instructions), code word systems (pre-arranged phrases not shared digitally), and process-level controls (no single-person authorization for financial transactions above a threshold, regardless of who requests it).
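
The process-level controls above can be expressed as a release rule that ignores how convincing the requester looked or sounded. This is a minimal sketch: the `TransferRequest` fields and the $10,000 threshold are hypothetical, and a real payment system would enforce this in the workflow engine rather than in application code like this.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class TransferRequest:
    amount_usd: float
    requested_by: str
    approvers: Set[str] = field(default_factory=set)
    callback_confirmed: bool = False  # confirmed via a pre-registered channel


DUAL_CONTROL_THRESHOLD_USD = 10_000  # hypothetical policy value


def can_release(req: TransferRequest) -> bool:
    # The requester never counts as an approver of their own request.
    independent = req.approvers - {req.requested_by}
    if req.amount_usd < DUAL_CONTROL_THRESHOLD_USD:
        return len(independent) >= 1
    # Above the threshold: two independent approvers AND out-of-band confirmation.
    return len(independent) >= 2 and req.callback_confirmed


small = TransferRequest(5_000, "alice", {"bob"})
video_call_only = TransferRequest(25_000_000, "cfo", {"bob", "carol"})
self_approved = TransferRequest(25_000_000, "cfo", {"cfo", "bob"}, callback_confirmed=True)
fully_verified = TransferRequest(25_000_000, "cfo", {"bob", "carol"}, callback_confirmed=True)

for req in (small, video_call_only, self_approved, fully_verified):
    print(req.amount_usd, can_release(req))
```

Under a rule like this, a flawless deepfake on a video call still cannot move money, because nothing in the call sets `callback_confirmed`.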

The organizations ahead of this curve have stopped treating "it sounds and looks like them" as sufficient evidence of identity.

7. Cloud-Native Attack Escalation — The Attacker's New Home Turf

Here's a number that should reframe every security team's priorities: according to the IBM Cost of a Data Breach Report 2024, 40% of all data breaches involved data stored in cloud environments — public cloud, private cloud, or across multiple clouds. The cloud isn't just where your apps run. It's where your risk concentrates.

Attackers have studied this. And a critical shift has occurred: sophisticated adversaries are no longer primarily trying to force their way into cloud environments. They're exploiting the staggering complexity of how cloud environments are configured. IAM policy misconfigurations, overpermissioned service accounts, publicly exposed storage buckets, misconfigured API gateways — these create attack paths that require no vulnerability exploitation at all.

Wiz's 2025 State of Cloud Security Report found that 72% of cloud environments had at least one publicly exposed, highly sensitive workload — databases, secrets managers, or storage buckets containing credentials or PII. More critically: 35% of organizations had a critical vulnerability exploitable by an unauthenticated external attacker through a public-facing cloud service. That's not a misconfiguration problem. That's a structural exposure.

What's happening in the real world

Wiz documented what they call the "Toxic Cloud Trilogy" across hundreds of enterprise cloud environments: the simultaneous combination of a critical vulnerability, an overprivileged identity, and a public exposure. When all three conditions exist together, an unauthenticated attacker has a complete path from the internet to sensitive data. Their 2025 data found this combination present in 35% of cloud environments scanned — more than one in three.
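
The three-condition combination described above is easy to express as a filter once the underlying findings exist. The `Workload` fields and sample entries here are hypothetical. A real platform derives them from vulnerability scans, IAM analysis, and network reachability data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    has_critical_vuln: bool
    overprivileged_identity: bool
    publicly_exposed: bool


def is_toxic(workload: Workload) -> bool:
    # All three conditions together give an unauthenticated path to sensitive data.
    return (workload.has_critical_vuln
            and workload.overprivileged_identity
            and workload.publicly_exposed)


# Made-up scan results for illustration only.
workloads = [
    Workload("billing-api", True, True, True),
    Workload("internal-batch", True, True, False),
    Workload("marketing-site", False, False, True),
]
toxic = [w.name for w in workloads if is_toxic(w)]
print(toxic)
```

The point of prioritizing the intersection is that removing any one of the three conditions breaks the attack path, which is often faster than fixing all of them.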

Cloudflare's 2025 DDoS Threat Report recorded that total DDoS attacks more than doubled in 2025 (a 121% increase), and that hyper-volumetric attacks (those exceeding 1 Tbps or 100M pps) surged by over 500% in certain quarters. Hyper-volumetric attacks aimed at cloud-native application infrastructure are designed not just to overwhelm bandwidth but to exploit cloud auto-scaling mechanisms, driving compute costs skyward while availability degrades. Ransom DDoS also took on a new form in 2025, with attackers threatening sustained cloud cost amplification campaigns unless paid.

Google Cloud’s 2026 Cybersecurity Forecast highlights a sobering shift: misconfigurations and identity gaps remain more pervasive entry points than the exploitation of software vulnerabilities. Industry-wide data from 2025 shows that over 60% of organizations suffer cloud incidents rooted in these preventable errors. The takeaway: cloud security in 2026 is primarily a configuration governance problem, not a patching problem. You can patch every CVE and still be critically exposed through a single permissive IAM role.
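
As one example of a configuration check that no amount of patching replaces, the sketch below flags statements in an AWS-style IAM policy document that allow every action on every resource. It assumes the standard policy JSON shape (`Statement`, `Effect`, `Action`, `Resource`). The sample policy is made up, and a real audit would also look at service-level wildcards, `NotAction`, and conditions.

```python
def _as_list(value):
    # Policy fields may hold a single value or a list of values.
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def overly_permissive_statements(policy: dict) -> list:
    """Return the Sid of each Allow statement granting '*' on '*'."""
    flagged = []
    for statement in _as_list(policy.get("Statement")):
        if statement.get("Effect") != "Allow":
            continue
        actions = _as_list(statement.get("Action"))
        resources = _as_list(statement.get("Resource"))
        if "*" in actions and "*" in resources:
            flagged.append(statement.get("Sid", "<no Sid>"))
    return flagged


# Made-up policy document for illustration only.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "AdminEverything", "Effect": "Allow", "Action": "*", "Resource": "*"},
        {"Sid": "ReadReports", "Effect": "Allow", "Action": ["s3:GetObject"],
         "Resource": "arn:aws:s3:::example-reports/*"},
    ],
}
flagged = overly_permissive_statements(policy)
print(flagged)
```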

Where this is heading

Cloud-Native Application Protection Platforms (CNAPP) are the fastest-growing enterprise security category — precisely because they address the root problem: no team can manually audit the configuration state of thousands of cloud resources across multiple providers in real time.

Automated, continuous posture management with drift detection isn't a premium capability anymore. In 2026, it's the security baseline. (For a deeper look at how cloud-native infrastructure is evolving across engineering teams — including containers, Kubernetes adoption, and the complexity challenges that create security exposure — see our software development trends for 2026.)

8. The AI-Powered SOC — Closing the 4.8-Million-Job Gap

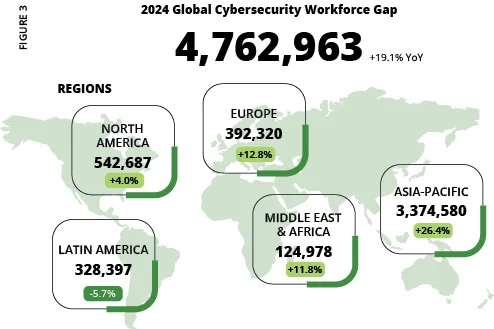

The cybersecurity skills shortage has reached a structural ceiling. ISC2's 2024 Cybersecurity Workforce Study measured the global shortfall at 4.8 million unfilled cybersecurity positions — a gap that has barely moved despite years of training programs, university partnerships, and government workforce initiatives. There simply aren't enough qualified practitioners to staff the security operations that modern enterprises require.

This creates a dangerous asymmetry: attackers are using AI to multiply their capabilities. Defenders are constrained by headcount.

The answer isn't hiring faster — it's making each existing analyst dramatically more capable. The IBM Cost of a Data Breach Report 2024 measured the concrete impact: organizations that had deployed AI and automation extensively in their security operations identified and contained breaches 108 days faster than organizations without — and saved an average of $2.22 million per breach in reduced impact costs. That's not a marginal efficiency gain. That's a categorical risk reduction.

What's happening in the real world

Microsoft's Security Copilot — their AI assistant integrated across the Microsoft security stack — generates natural language summaries of attack chains, recommended response playbooks, and threat context from correlated signals that would take analysts hours to assemble manually. Early adopter data published by Microsoft shows analysts using Security Copilot resolve security incidents 22% faster and are 7% more accurate across all tasks compared to working without AI assistance.

Darktrace's autonomous response platform now handles 95% of detected threats without requiring human authorization — autonomously blocking malicious connections, quarantining affected accounts, and containing lateral movement, then surfacing a structured summary to analysts after containment. Their 2025 published data shows AI autonomous response containing attacks in an average of 2 seconds — compared to 60+ minutes for human-led response workflows.

SentinelOne's Purple AI platform allows security analysts to query their entire environment in plain language — "show me all processes that established external connections to this IP range in the last 30 days and haven't been seen before" — and receive structured, actionable results without writing complex SIEM queries. The practical result: analysts at the junior level can conduct investigations that previously required senior-level expertise, multiplying effective team capacity without adding headcount.
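
For a sense of what that plain-language question expands to, here is a rough Python equivalent over an in-memory event list. It is not Purple AI's query language. The `ConnectionEvent` schema is hypothetical, the IP addresses come from reserved documentation ranges, and "haven't been seen before" is interpreted as "no events from that process before the 30-day window".

```python
import ipaddress
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ConnectionEvent:
    process: str
    remote_ip: str
    timestamp: datetime


def new_processes_contacting(events, cidr: str, now: datetime, window_days: int = 30):
    network = ipaddress.ip_network(cidr)
    cutoff = now - timedelta(days=window_days)
    # Processes with any activity before the window count as previously seen.
    seen_before = {e.process for e in events if e.timestamp < cutoff}
    hits = {
        e.process for e in events
        if e.timestamp >= cutoff
        and ipaddress.ip_address(e.remote_ip) in network
        and e.process not in seen_before
    }
    return sorted(hits)


# Made-up telemetry for illustration only.
now = datetime(2026, 1, 15, 12, 0)
events = [
    ConnectionEvent("chrome.exe", "203.0.113.10", now - timedelta(days=40)),
    ConnectionEvent("chrome.exe", "203.0.113.10", now - timedelta(days=2)),
    ConnectionEvent("updater_x.exe", "203.0.113.77", now - timedelta(days=1)),
    ConnectionEvent("backup.exe", "198.51.100.5", now - timedelta(days=3)),
]
result = new_processes_contacting(events, "203.0.113.0/24", now)
print(result)
```

Even this toy version needs a time window, a CIDR match, and a first-seen baseline, which is the kind of query construction junior analysts tend to struggle with.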

Where this is heading

Gartner projects that by 2028, the majority of security operations centers will rely on AI-automated triage and response for tier-1 incidents — with human analysts focused exclusively on complex investigations, novel threat scenarios, and strategic decisions that require contextual judgment AI hasn't developed.

The 4.8-million-person gap won't close through training. It'll close by making every existing analyst 10x more effective.

Organizations that don't invest in AI-augmented security operations in 2026 will face a compounding structural disadvantage as the gap between AI-empowered attackers and under-resourced defenders continues to widen.

The Common Thread

Read across all eight trends and a single pattern emerges: the attack surface has stopped being primarily technical and become fundamentally systemic.

Traditional cybersecurity was built around a containable model — patch the vulnerabilities, harden the perimeter, block known signatures. You could, in theory, enumerate the threats and address them methodically. That model is structurally broken.

The threats defining 2026 operate at machine speed (agentic AI), move through legitimate-seeming identities (credential theft, deepfakes), hide inside trusted components (AI supply chain), and pre-position silently across critical infrastructure for months before activation (OT/ICS campaigns). They exploit the complexity of modern organizations — not isolated technical weaknesses that can be patched.

What this means concretely:

Speed is now a security capability in itself. Organizations that detect and respond in minutes operate in a fundamentally different risk category than those that measure response in days. The 108-day faster detection time from IBM's data isn't just a metric. It's the difference between a contained incident and a catastrophic breach.

Identity governance is the most urgent infrastructure project on any CISO's list. If your organization can't answer "who — or what — has access to what, and why?" across human accounts, machine identities, and AI agents simultaneously, you're operating blind in the category where most breaches originate.

Cryptographic migration can't be a 2027 problem. If you handle data that needs to remain confidential for more than five years, HNDL attacks mean you're already inside the exposure window. The migration starts now or the decision is made for you.

AI in your stack is only as trustworthy as its supply chain. Every model, plugin, and agent framework adopted without provenance verification is a potential attack vector that your existing security tooling wasn't built to see.

The organizations that emerge from 2026 with a strong security posture won't be the ones that spent the most on point solutions. They'll be the ones that recognized the nature of the threat had fundamentally changed — and changed their strategy, not just their tools, to match.
